When Artificial Intelligence Redesigns the Economy: What Is My Responsibility?

This article was written by Guy TANTCHOU

Expert in structural mechanics and a pioneer in applying AI to packaging.

The printing press democratized knowledge. The steam engine triggered the Industrial Revolution. Electricity redefined modes of production and daily life. The automobile reshaped trade and urban planning. Finally, the internet abolished distances and connected markets in real time. Each of these inventions revolutionized its era, but each was confined to a specific domain or primary use. Artificial intelligence, however, stands radically apart: it is not a specialized tool but a technology that seeks to optimize how we interact with the world around us. For this reason, it can be applied to every sector at once. Many consider it the greatest human invention of all time.

And this invention is still in its early stages. Today, AI is spreading through every sphere of the economy at remarkable speed. It optimizes supply chains, assists doctors with their diagnoses, writes content, drives vehicles, manages financial portfolios, and personalizes the experience of billions of consumers. Companies that adopt it gain in productivity and competitiveness; those that ignore it risk disappearing. This imbalance makes any step backward economically impossible: the efficiency gains are too large for a rational actor to forgo voluntarily.

But this massive integration also carries considerable risks. AI amplifies the capacity to produce disinformation and propaganda at scale. It deepens inequalities between those who master the technology and those who bear its consequences. It threatens individual freedoms through surveillance, profiling, and the exploitation of personal data. As our dependence on these systems grows, a philosophical question arises: are we still free in our choices when they are increasingly guided, filtered, or suggested by algorithms?

This question is legitimate, but it should not lead us to resignation. To answer it lucidly, we must first understand what AI is and, above all, what it is not. AI operates on probabilistic logic: it detects patterns in data, calculates correlations, and optimizes results against measurable criteria. In other words, it excels where a problem is clearly defined and performance can be quantified. That very nature sets its fundamental limit: AI has no consciousness. It feels no empathy, no doubt, and has no moral sense. It is therefore structurally unable to handle situations where maximizing profit or a statistical reward is not the main challenge, such as social justice, dignity, the ethics of care, or human connection. AI is not magic: faced with a poorly defined, ambiguous, or deeply human problem, it will produce a statistically plausible response, but not necessarily a relevant one. This is where the most common mistake lies today: comparing ourselves to the machine, or expecting from it what it cannot deliver. Defeatism and blanket criticism often stem from this confusion.

However, if we accept that AI has intrinsic limits, one conclusion follows: the entire technological and economic ecosystem built around it cannot survive without the human factor. It is people who train the models, correct their biases, define how they are used, and adapt them to varied cultural and social contexts. The development of AI, today and tomorrow, will continue to depend directly on the expectations that individuals, companies, and societies place in it, because those expectations guide investment, set research priorities, and shape the resulting products.

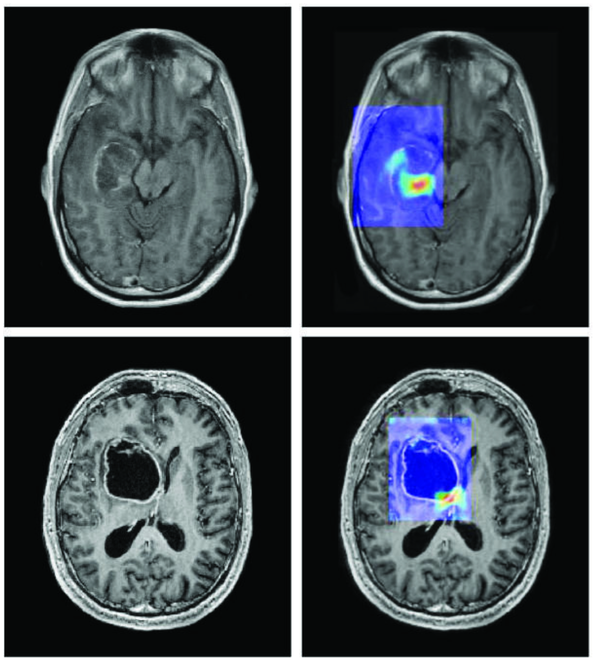

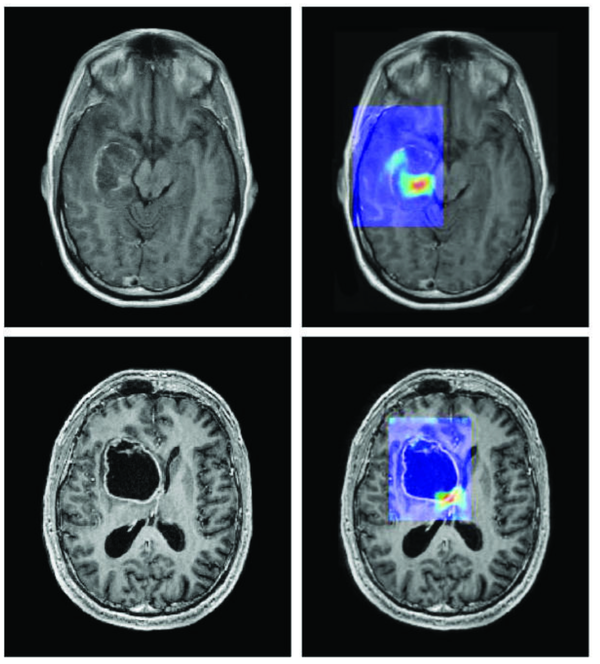

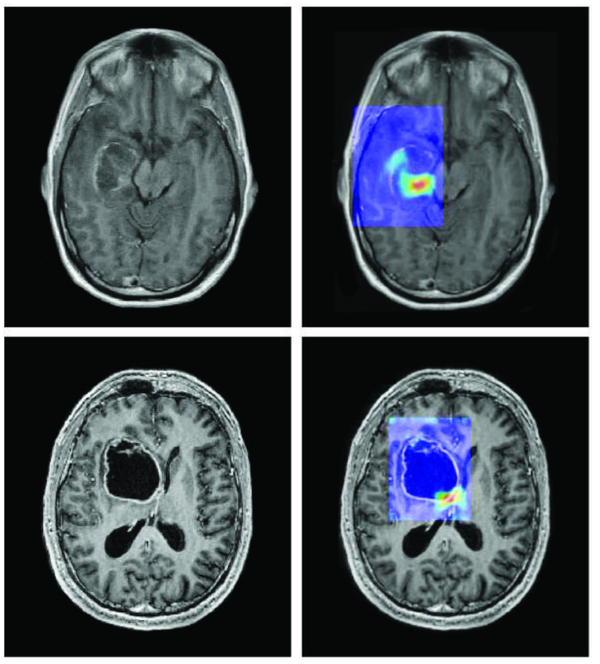

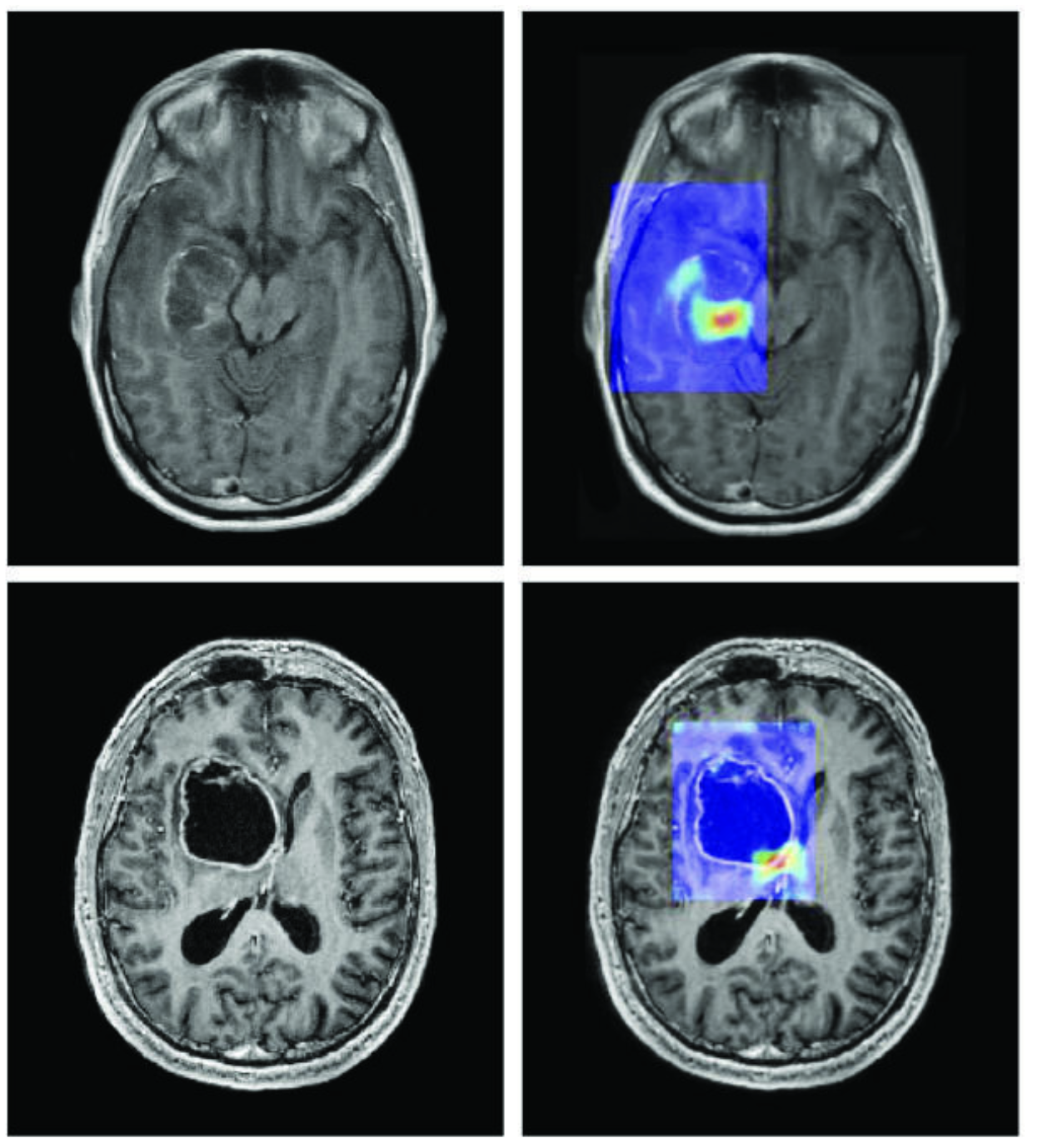

Three concrete situations already illustrate how effectively humans and AI can work together. In medicine, AI systems analyze thousands of X-rays in seconds to detect early signs of cancer [1]; but it is the doctor who interprets the patient's clinical context, talks with them, and decides on the appropriate treatment protocol: AI accelerates diagnosis, but the human bears the responsibility and gives the result its meaning. In industry, car manufacturers use AI for quality control on production lines [2], identifying defects invisible to the naked eye; yet it is engineers who design the processes, adjust tolerance thresholds, and make the call when a problem falls outside the situations the algorithm was built to handle. Finally, in scientific research, DeepMind's AlphaFold has predicted the three-dimensional structure of more than two hundred million proteins [3], a task that would have taken researchers alone centuries; but it is biologists and chemists who formulate hypotheses, design experiments, and turn these predictions into treatments or new materials. In each case, the lesson is the same: AI does not replace humans; it multiplies their capacity for action.

What should we remember from all this?

AI is our most powerful invention, but also the most demanding of those who use it. It does not think for us, guess our needs, or solve problems we cannot articulate. Debating for or against AI, fearing it or idolizing it, already misses the point. The real battle, for each of us, is learning to use it to solve concrete problems: speeding up a diagnosis, improving a process, advancing research, or making a better-informed decision. This means learning to ask the right questions before expecting the right answers, investing in training rather than fascination, and remembering that a tool, however revolutionary, is only as valuable as the intention of the person wielding it. AI is a lever, not a destination. It is up to us to decide where it takes us.

[1] Can Artificial Intelligence Help See Cancer in New, and Better, Ways?

[2] Artificial intelligence as a quality booster

[3] ‘The entire protein universe’: AI predicts shape of nearly every known protein